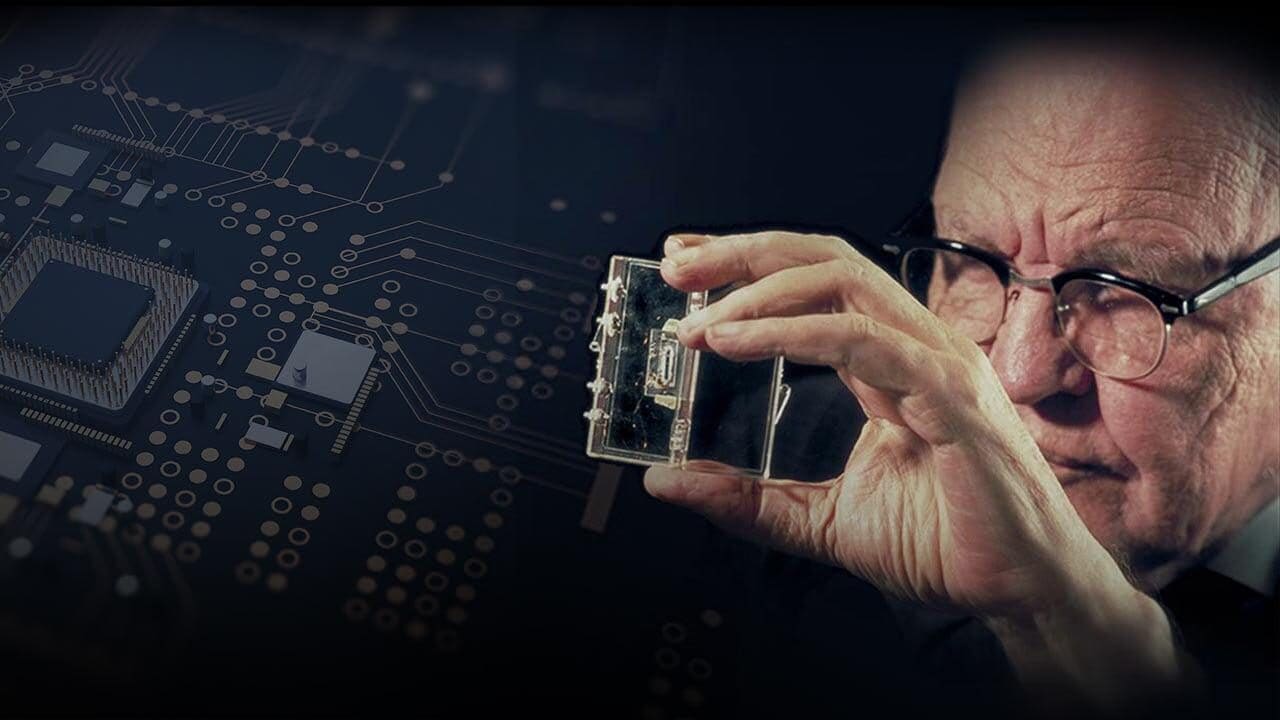

On September 12, 1958, one of the most important pages in the history of technology was written: Jack Kilby, a young American electronics engineer recently hired by Texas Instruments, demonstrated a working integrated circuit for the first time. That apparently simple experiment (an oscillator capable of generating a continuous sinusoidal signal) set off the microchip revolution, giving rise to the tiny components that today make computers, smartphones, cars, satellite systems and countless other devices work.

Kilby’s insight did not come out of nowhere. It was the product of years of reflection on the problem of miniaturizing electronic devices, known among engineers as the “tyranny of numbers”: the more complex a circuit became, the harder it was to connect thousands of components without errors or prohibitive costs. His idea, which he called “The Monolithic Idea”, was to create transistors, resistors and capacitors on a single block of semiconductor material, thus avoiding piece-by-piece assembly.

Despite initial skepticism, that discovery changed the trajectory of science and industry. In the following paragraphs we will see how a Kansas boy, inspired by an ice storm and by a father who worked alongside amateur radio operators, came to conceive what is in every respect the heart of modern electronics, later sharing credit for the microchip with Robert Noyce, a future co-founder of the semiconductor giant Intel.

Who was Jack Kilby

Jack St. Clair Kilby was born in 1923 in Jefferson City, Missouri, but grew up in Great Bend, Kansas. His father managed a small electric company that supplied power to the rural areas of the state. In April 1938, while Jack was still a high school student, an ice storm knocked down poles and telephone lines, and on that occasion he watched his father work with amateur radio operators to restore contact with isolated customers. The episode fascinated him so much that it shaped his vocation: electronics would become his field of study and, in every respect, his purpose in life. After high school he enrolled at the University of Illinois, graduating in electrical engineering in 1947, just a year before Bell Labs announced the transistor, the component destined to supplant vacuum tubes.

In the early years of his career Kilby worked in Milwaukee producing radio and television devices while studying for a master’s degree in evening classes. The turning point came in 1958, when he was hired by Texas Instruments in Dallas. There he was allowed to focus on the problem of miniaturization: instead of building circuits by connecting hundreds of separate transistors, he imagined integrating all the elements on a single piece of germanium, a semiconductor in common use before silicon became the standard. On July 24 he wrote the idea down in his notebook (calling it “The Monolithic Idea”), and a few weeks later, on September 12, he demonstrated it to the company’s managers.

Almost simultaneously, Robert Noyce of Fairchild Semiconductor developed a similar solution that was easier to mass-produce. After years of legal disputes, the two companies agreed to cross-license their technologies, effectively recognizing the two men as co-inventors. From then on, integrated circuits began to be adopted in military and space applications, up to NASA’s decision to use them in the Apollo missions, a recognition that cemented their reputation for reliability.

Other valuable contributions and the Nobel Prize in Physics

Kilby did not stop at the microchip. He contributed to the development of the handheld calculator and the thermal printer, and experimented with silicon applications for solar energy production. He obtained more than 60 patents, became a professor at Texas A&M University and received prestigious awards, including the National Medal of Science and, in 2000, the Nobel Prize in Physics. He died of cancer in 2005, but his name stands beside that of Noyce in the history of electronics.