The summaries generated by Google’s artificial intelligence, the so-called AI Overviews (the blocks of text that usually appear at the top of search results and automatically summarize the answer to a question), correctly answer approximately 91% of factual questions. This looks like an excellent result, but it hides a problem of gigantic scale. According to some estimates, more than 5 trillion searches are made on Google every year. That is no small number. If roughly 9% of these searches return incorrect AI summaries, tens of millions of responses could contain incorrect information every hour. This is far from excellent.
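The order of magnitude can be checked with a back-of-the-envelope calculation. The sketch below is only an illustration: it takes the 5 trillion figure and the 91% accuracy rate at face value, and the `overview_share` parameter is a hypothetical knob (not a figure from the study) for the fraction of searches that actually display an AI Overview. With every search counted, the result is roughly 51 million incorrect summaries per hour; a smaller share scales that number down proportionally.

```python
# Back-of-the-envelope estimate; all inputs are the article's round figures.
SEARCHES_PER_YEAR = 5e12   # "more than 5 trillion" searches per year (estimate)
ACCURACY = 0.91            # share of factual questions answered correctly
HOURS_PER_YEAR = 365 * 24


def wrong_answers_per_hour(searches_per_year, accuracy, overview_share=1.0):
    """Estimate incorrect AI summaries per hour.

    overview_share is a hypothetical assumption: the fraction of searches
    that actually show an AI Overview (1.0 means every search does).
    """
    error_rate = 1.0 - accuracy
    return searches_per_year * overview_share * error_rate / HOURS_PER_YEAR


upper_bound = wrong_answers_per_hour(SEARCHES_PER_YEAR, ACCURACY)
print(f"About {upper_bound / 1e6:.0f} million incorrect summaries per hour")
```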

This situation is illustrated by an analysis conducted by The New York Times in collaboration with Oumi, a startup specializing in artificial intelligence, which tested Google’s system on thousands of concrete questions. The results show a slight improvement in the accuracy of the answers, but also a new critical issue: the sources cited by AI Overviews are becoming less reliable, making it harder for users to independently verify the information they receive.

AI Overview put to the test

The method used by Oumi to test AI Overviews is called SimpleQA. It is a benchmark (i.e. a standardized evaluation tool) developed by OpenAI and widely used in the sector to measure the factual accuracy of artificial intelligence systems. On a sample of 4,326 searches, the results showed a clear improvement: with Gemini 2 (the model in use in October 2024), correct answers stopped at 85%. With the upgrade to Gemini 3 (tested in February 2025), the percentage rose to 91%.

The real problem, however, is not so much the answer itself as the sources that accompany it. Oumi found that a large portion of the correct answers detected in February were “unsubstantiated.” In other words, the links cited in support of the response did not actually confirm what was stated. In October this percentage was already worrying at 37%, but in February it rose to 56%. In practice, Google provides the right answer in most cases, but in over half of those cases the pages it links to do not explain the answer, do not confirm it or even contradict it. Among the sources most cited by Gemini summaries are Facebook and Reddit, platforms that certainly do not guarantee the same reliability as a journalistic or scientific source.
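To see what these percentages mean for the whole sample, the two rates can be multiplied. The sketch below assumes, as the wording above suggests, that the 37% and 56% figures are shares of the correct answers rather than of all answers; the function name is purely illustrative. Under that assumption, answers that are correct but not backed by their cited sources go from about 31% of all answers in October to about 51% in February.

```python
# Illustrative arithmetic only; assumes the "unsubstantiated" percentages
# are measured relative to the correct answers, not to all answers.

def correct_but_unsubstantiated(accuracy, unsubstantiated_share_of_correct):
    """Share of ALL answers that are correct yet unsupported by their sources."""
    return accuracy * unsubstantiated_share_of_correct


october = correct_but_unsubstantiated(0.85, 0.37)
february = correct_but_unsubstantiated(0.91, 0.56)

print(f"October:  {october:.0%} of all answers")
print(f"February: {february:.0%} of all answers")
```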

The New York Times documented some of the most egregious errors found during the study. When asked when Bob Marley’s house became a museum, Google responded “1987” instead of the correct “1986”, with sources that did not confirm the date given. In a search on Yo-Yo Ma and the Classical Music Hall of Fame, the engine linked to the organization’s official website, yet claimed that the musician was not a member. In another case, Dick Drago’s age at death was correct, but the date of death was wrong. These are isolated cases, certainly, but emblematic of a recurring pattern.

Even more relevant is an experiment conducted by a BBC journalist, who created an article containing deliberately false information and published it online. In less than 24 hours, Google’s summary system had already absorbed that erroneous information and reproduced it in its AI Overviews. This phenomenon, known as “data poisoning”, shows how vulnerable automatic summary systems are to unreliable or manipulated content.

Google’s “double” response

Google’s response to the problems identified by the study did not take long to arrive. The online search giant disputed both the methodology and the conclusions. Spokesman Ned Adriance said the report had “serious shortcomings”, underlining that SimpleQA is a benchmark developed by a direct competitor, OpenAI (the company that develops ChatGPT), and that it contains some inaccuracies of its own.

Google also noted that Oumi used its own AI systems to analyze Google’s AI-generated summaries, potentially introducing an additional margin of error. These arguments are not entirely unfounded, but they risk sounding paradoxical: claiming that a study on the imprecision of AI was conducted with tools that are also imprecise certainly does not strengthen confidence in the product being defended.

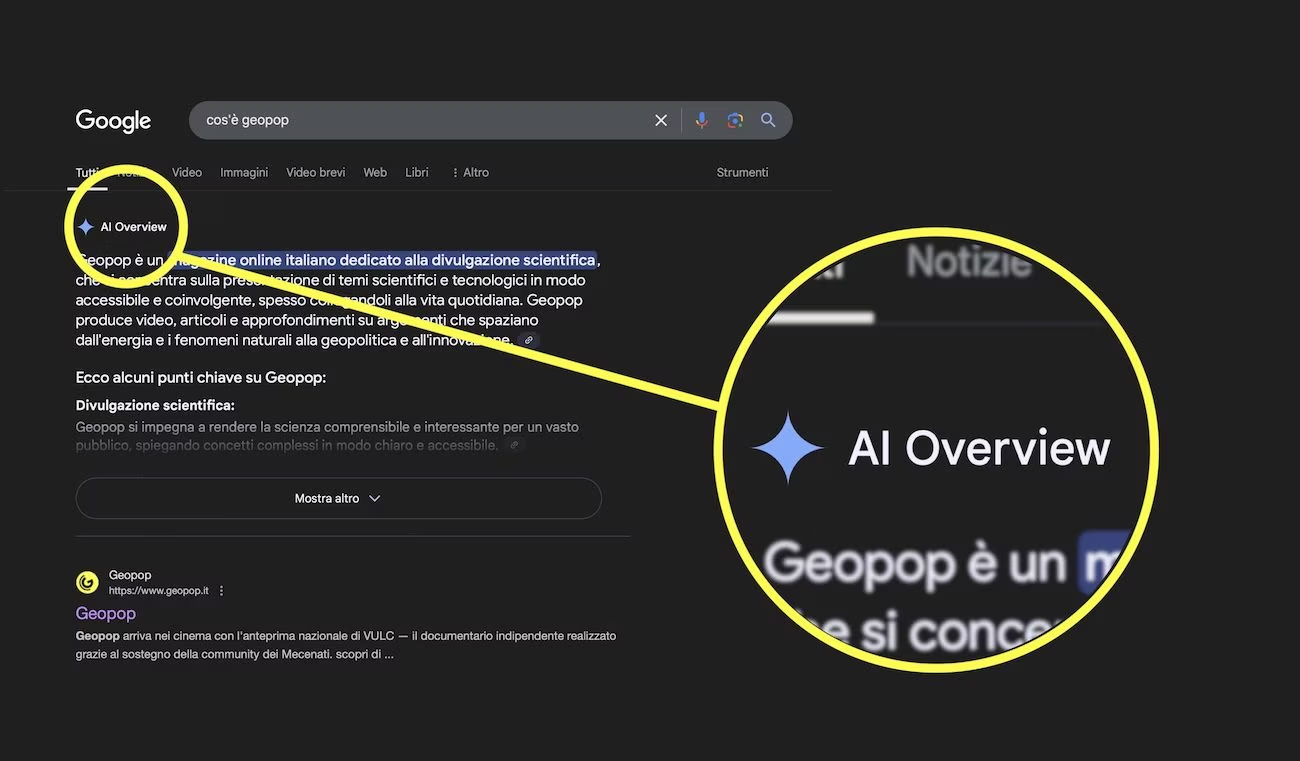

On that note, we wondered whether the “official” answer given by Google matches the answer generated by its own AI Overview. And do you know what we discovered? When we asked Google “How accurate are the AI Overview responses”, this is the answer we were given.

As you have just noticed, Google’s AI Overview itself claims to be accurate in 90% of cases, roughly confirming the percentage that emerges from the study conducted by The New York Times in collaboration with Oumi. This is paradoxical: if the study in question really is inaccurate, then the answer given by Google’s AI Overview is inaccurate too, which shows how easily it is influenced by the sources it draws on from the Web.