For more than a decade, the research team led by Professor Chad Bouton at the Feinstein Institutes for Medical Research has been studying how to translate the brain activity of patients with motor paralysis into muscular commands that allow them to regain control of their bodies. Until now, the team had only been able to work with patients who already had brain implants. With the “Human Avatar project”, the research is going a step further, into something never tried before: offering a rehabilitation path to a patient with a spinal injury without implanting any chip in her brain. To do so, the team is experimenting with a connection between her hand and the brain of Keith Thomas, a quadriplegic patient with a complete spinal injury who has a brain implant.

The team has been working for almost three years with Thomas, who was already taking part in another of its experimental procedures. Thanks to a combination of microchips, artificial intelligence and years of work, over the past two years Thomas has been able to move his arm, lift a cup and even grasp an eggshell without breaking it. The AI was trained not only to transform Thomas’ brain signals into movements, but also to integrate sensory feedback to enable more precise movements.

The goal now is to achieve the same results with Kathy Denapoli, a patient with an incomplete spinal injury, by creating a rehabilitation path in which Thomas’ brain signals guide Denapoli’s arm. The technology is highly experimental and still under development, but it is promising, in part because it promotes collaboration between patients and increases their motivation.

Let’s see in more detail how this approach works and what the future prospects are.

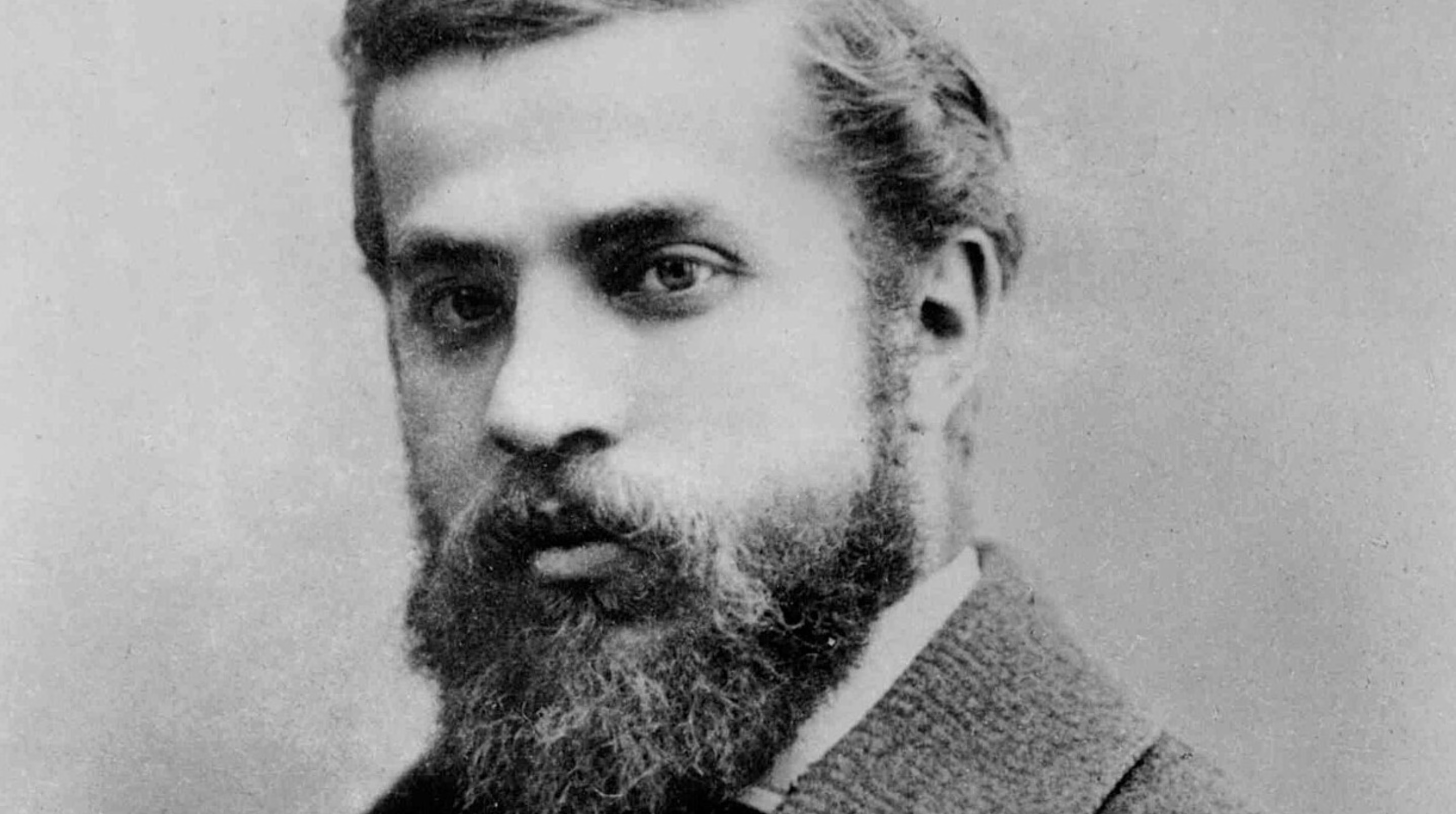

The case of Keith Thomas

In 2020, Keith Thomas, an American, suffered a spinal cord injury at the neck. The spinal cord is made up of millions of neurons, which transmit and process messages between the brain and the rest of the body, making movement and perception possible. When a person suffers a spinal cord injury, these messages are disrupted, causing varying levels of paralysis. In Thomas’ case, the injury caused paralysis and loss of the sense of touch from the chest down.

Two years later, Thomas joined the experimental project run by Bouton’s team, with the aim of recovering, at least in part, movement and tactile perception. For an entire year, the team mapped the patient’s brain as he observed hand movements shown on a screen and tried to reproduce them. This allowed the team to identify exactly which areas were involved in movement and to implant five microchips, each smaller than the tip of a little finger, in the brain areas responsible for movement (motor cortex) and touch (somatosensory cortex). The microchips contained a total of 224 electrodes capable of recording the electrical activity of individual neurons.

AI integrates motor and sensory signals

In August 2025, the team reported on the entire procedure carried out so far. Put simply, the researchers trained an AI model to “decode” signals from the motor cortex and use them to drive a series of electrodes on the patient’s neck, “bypassing” the lesion. Other electrodes, applied to the forearm, directly stimulate the muscles responsible for hand movements.
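To make the idea of “decoding” more concrete, here is a deliberately simplified sketch in Python. It is not the team’s model: the four recording channels, the weights, the movement names and the two-electrode stimulation patterns are all invented for illustration, and a simple weighted sum stands in for the trained AI model.

```python
# Toy illustration only: channel count, weights and movement names are invented.
# Each row scores one candidate movement from four recorded firing rates.
WEIGHTS = {
    "rest":       [0.0, 0.0, 0.0, 0.0],
    "open_hand":  [0.9, -0.2, 0.1, 0.0],
    "close_hand": [-0.3, 0.8, 0.0, 0.2],
}

# Hypothetical stimulation amplitudes (arbitrary units) for two electrodes.
STIM_PATTERNS = {
    "rest":       [0.0, 0.0],
    "open_hand":  [1.0, 0.0],
    "close_hand": [0.0, 1.0],
}


def decode_intent(firing_rates):
    """Return the movement whose weighted sum of firing rates is highest."""
    scores = {
        movement: sum(w * r for w, r in zip(weights, firing_rates))
        for movement, weights in WEIGHTS.items()
    }
    return max(scores, key=scores.get)


def stimulation_for(firing_rates):
    """Map recorded activity to an electrode stimulation pattern."""
    return STIM_PATTERNS[decode_intent(firing_rates)]
```

In this toy version, strong activity on the first channel is read as “open the hand” and strong activity on the second as “close the hand”. A real decoder works with far more channels and is learned from recorded data rather than written by hand.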

To achieve real autonomy, however, movement alone is not enough: the sense of touch is also needed. To understand why, think about everyday actions such as brushing our teeth, drinking from a glass or blowing our nose. For each of these actions it is not enough to “think about the movement”. We must also perceive the shape, roughness and density of the object we are holding in order to judge how, and how hard, to grip it. Otherwise we risk hurting ourselves or dropping it. This is why the team integrated a second channel, capable of collecting and interpreting tactile signals. To do this, the team developed a neural network (one of the most common types of artificial intelligence model) that manages a constant feedback loop throughout the movement: motor signals initiate the movement of the arm, while tactile signals regulate its intensity and precision in real time.
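The feedback loop described here can be illustrated with a small simulation. This is only a sketch under invented assumptions, not the team’s neural network: the function name, the target pressure, the object stiffness and the gain are all hypothetical, and a simple proportional correction stands in for the learned model. The motor signal decides whether to squeeze at all, while the simulated touch signal decides how hard.

```python
def closed_loop_grip(motor_intent, target_pressure=1.0, stiffness=2.0,
                     gain=0.2, steps=50):
    """Simulate a grip force adjusted step by step from touch feedback.

    All parameters are hypothetical and in arbitrary units.
    Returns the list of pressures "felt" at each step.
    """
    grip_force = 0.0
    pressures = []
    for _ in range(steps):
        if motor_intent:
            # Simulated tactile signal: a stiffer object pushes back harder.
            sensed = stiffness * grip_force
            # Squeeze more if the contact is too light, less if too strong.
            grip_force += gain * (target_pressure - sensed)
        else:
            grip_force = 0.0  # no intent to move, so release the grip
        pressures.append(stiffness * grip_force)
    return pressures


pressures = closed_loop_grip(motor_intent=True)
```

With these values the simulated pressure rises gradually and settles close to the target instead of overshooting it, which is the behavior needed to hold something fragile. Without the touch signal, the controller would have no way of knowing when to stop squeezing.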

Through this process, over the course of two years, Thomas became able to drink from a cup and wipe his face on his own, and to hold an eggshell without breaking it. It is a huge achievement for a quadriplegic person.

Remote rehabilitation: the Human Avatar project

The research didn’t stop there. Bouton’s group is working to enable a form of “remote rehabilitation” that is less invasive than the procedure being tested with Thomas. The idea is to create a “human avatar”, in which a patient already equipped with a brain implant transmits signals to the body of another patient, allowing that patient to move.

The first results of this research were released as a preprint in September 2025: Thomas was able to guide the movements of Kathy Denapoli, a 63-year-old woman with an incomplete spinal injury, allowing her to pour water from a bottle with great precision. The preprint has not yet passed all the review stages needed for its results to be considered official. The experiments, however, were recorded with the consent of the participants and can be found on YouTube.

Beyond the technical results, this approach has also had a positive impact on a human level. A sense of collaboration developed between the patients involved, which increased their motivation during rehabilitation. Interviews with the participants highlighted a high level of personal satisfaction, suggesting that this type of “cooperative” rehabilitation could represent a new frontier for motor and sensory recovery, including for less severe neurological conditions such as stroke or damage to peripheral nerves.

Of course, this is still an experimental practice, and further studies will be needed to evaluate its long-term effectiveness, but these results represent a concrete example of how AI can contribute to improving our quality of life.